What Can We Expect from Artificial Intelligence in 2026?

Key Points

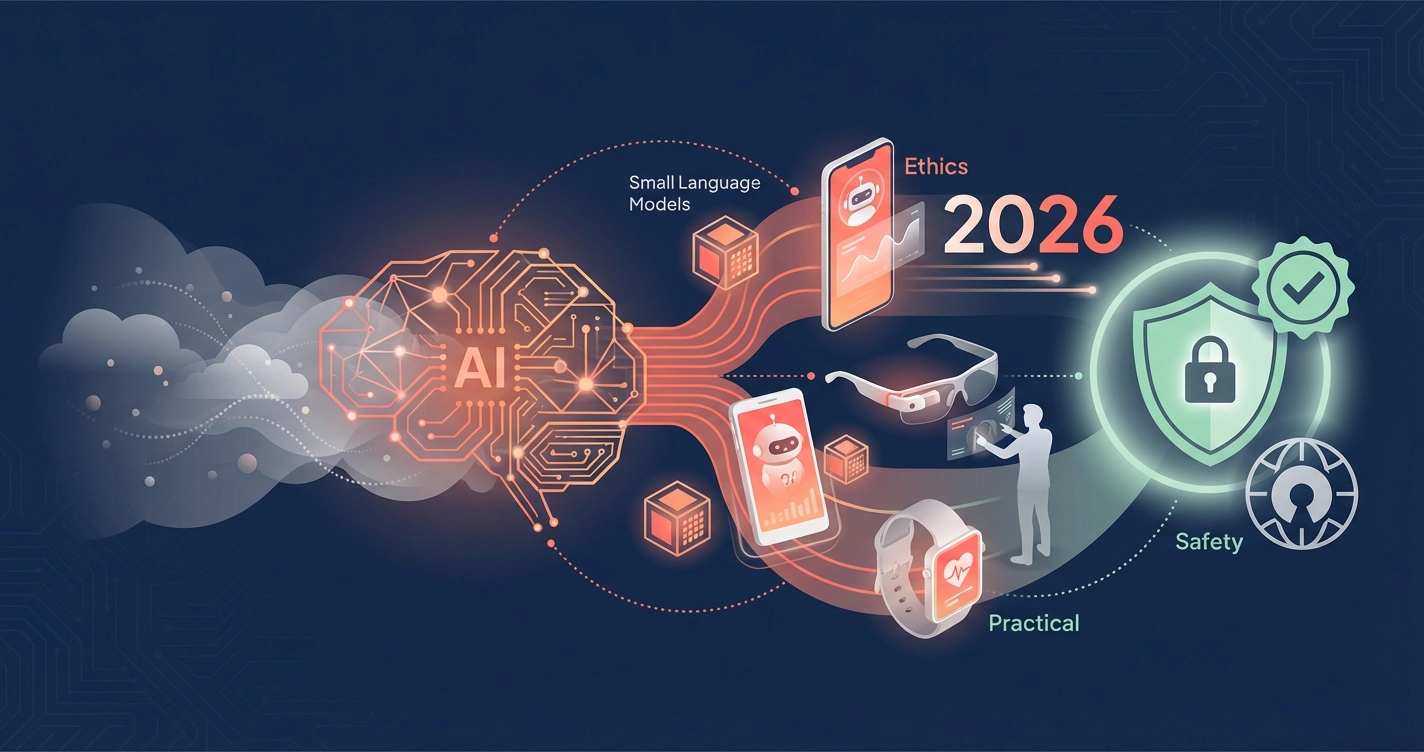

- AI in 2026 is expected to center on practical applications rather than flashy demonstrations, with a focus on smaller, specialized models

- Regulatory battles will intensify as governments worldwide grapple with AI governance and safety frameworks

- Physical AI integration through wearables and robotics will accelerate, raising new privacy and security considerations

- Chinese open-source models are reshaping the global AI landscape, creating new trust and transparency questions

- AI augmentation tools will become commonplace, though with important implications for data privacy and responsible use

Background

The artificial intelligence industry has reached a critical inflection point. After years dominated by ever-larger language models and bold promises, the focus is shifting toward making AI systems that are practical, safe, and genuinely useful in everyday contexts. This transition reflects a maturing industry that’s beginning to grapple seriously with the real-world implications of deploying AI at scale.

According to a TechCrunch article published January 2, 2026, experts describe 2026 as a year of transition: “one that evolves from brute-force scaling to researching new architectures, from flashy demos to targeted deployments, and from agents that promise autonomy to ones that actually augment how people work.”

What Happened

TechCrunch and MIT Technology Review have both published analyses of emerging AI trends for 2026, and they share a consistent theme: artificial intelligence is entering a phase defined by practical deployment rather than speculative breakthroughs.

MIT Technology Review reported on January 5, 2026, that the industry is witnessing five major shifts: increased adoption of Chinese open-source models, intensifying regulatory battles, AI-powered shopping experiences, potential scientific discoveries through LLMs, and complex legal challenges surrounding AI liability.

The shift toward smaller, specialized models represents a significant departure from the “bigger is better” philosophy that dominated recent years. Andy Markus, AT&T’s chief data officer, told TechCrunch that fine-tuned small language models (SLMs) “will be the big trend and become a staple used by mature AI enterprises in 2026, as the cost and performance advantages will drive usage over out-of-the-box LLMs.”

Why It Matters

This evolution toward practical AI applications carries profound implications for safety, privacy, and ethical AI development. As AI systems move from controlled environments into everyday devices and workflows, the risks and responsibilities multiply.

The emergence of physical AI—from wearables to robotics—introduces new privacy concerns. Smart glasses and health tracking devices now perform continuous, on-body inference, raising critical questions about data collection, consent, and surveillance. From an ethics perspective, users need clear information about what data these devices collect and how it’s processed.

Chinese open-source models gaining prominence globally also present trust challenges. While openness can enhance transparency and safety through community scrutiny, it also requires users to understand the provenance of the models they’re using and potential security implications.

The regulatory landscape adds another layer of complexity. President Trump’s December 2025 executive order, which attempts to override state AI laws, has created uncertainty. States like California are pushing back, fighting to maintain their AI safety requirements. This fragmentation makes it difficult for users to understand their rights and protections.

What’s Next

Looking ahead through 2026, several key developments will shape responsible AI adoption:

Agentic workflows will become mainstream as standardization efforts like Anthropic’s Model Context Protocol (MCP) reduce friction in connecting AI systems to real-world tools. This means users should prepare to vet AI agents carefully before granting access to sensitive systems or data.

Legal precedents will emerge from high-profile cases, including a trial scheduled for November in which a family is taking OpenAI to court over claims that its chatbot helped plan a teen’s suicide. These cases will help define AI companies’ liability for harmful outputs.

AI augmentation will become the dominant narrative over automation. Kian Katanforoosh, CEO of Workera, predicts that “2026 will be the year of the humans,” with companies realizing that AI works best alongside human judgment rather than replacing it entirely. This shift should create new roles focused on AI governance, transparency, safety, and data management.

Deep Details

The technical evolution deserves attention from a safety perspective. Many researchers believe the AI industry is “beginning to exhaust the limits of scaling laws,” as TechCrunch reported. This could mean a transition back to an age of research-focused innovation rather than simply making models bigger—potentially creating new categories of risks that haven’t been thoroughly studied.

World models—AI systems that learn how things move and interact in 3D spaces—represent another frontier with safety implications. While promising for applications like robotics and autonomous vehicles, these systems require robust safety testing before widespread deployment.

The energy demands of AI also raise sustainability concerns. Meta’s January 9, 2026, announcement that it had secured 6.6 gigawatts of nuclear energy for its AI data center highlights the massive power requirements driving AI development.

Source Attribution

- TechCrunch, published January 2, 2026. Original article: https://techcrunch.com/2026/01/02/in-2026-ai-will-move-from-hype-to-pragmatism/

- MIT Technology Review, published January 5, 2026. Original article: https://www.technologyreview.com/2026/01/05/1130662/whats-next-for-ai-in-2026/

About the Author

This article was written by Nadia Chen, an expert in AI ethics and digital safety who helps non-technical users understand how to use artificial intelligence responsibly. Nadia focuses on making complex AI safety concepts accessible while encouraging informed and secure AI adoption.